Table of Contents

- The Big Takeaway: Identity Is Now the Blast Radius

- ID Breaches: When Compliance Data Becomes Criminal Gold

- Code Smell Is Still a Security Smell

- Why “Poetic Flows” Is More Than a Cute Phrase

- What Security Teams Should Actually Do on Monday

- The Human Cost Behind the Headlines

- Experience Section: What a Week Like This Feels Like From the Front Row

- Conclusion

Some security weeks are neat little filing cabinets. A ransomware story goes here, a patch story goes there, and everyone pretends the internet is basically an orderly office with occasional smoke. Then there are weeks like this one, where everything spills into everything else. Identity data leaks into compliance debates. Old-school code smell turns into very modern remote code execution. A malicious app gets blessed with OAuth, walks through the front door, and politely steals the furniture. Meanwhile, exploit chains read less like bug reports and more like spoken-word performances written by somebody who really, really hates defenders.

That is the theme of this week in security: identity is becoming the favorite shortcut, code quality still predicts security quality, and attackers are chaining tiny flaws together with almost artistic rhythm. It is not pretty, exactly. But it is poetic in the way a thunderstorm is poetic: dramatic, layered, and likely to ruin your weekend plans.

The Big Takeaway: Identity Is Now the Blast Radius

For years, security teams liked to separate “breach prevention” from “identity management,” as if one team guarded the walls and the other team handed out badges in the lobby. That division looks increasingly silly. The modern breach is often an identity story first and a malware story second. Credentials, tokens, connected apps, support accounts, contractor access, and session artifacts are now the keys to the kingdom. Attackers no longer need to kick down the front door if they can persuade someone to open it, label it “approved,” and even hold it for them with a smile.

That pattern keeps showing up in the numbers too. Security reporting and official advisories now point in the same direction: identity abuse is not a side quest. It is the main storyline. When cloud and SaaS incidents are increasingly rooted in identity compromise, the old fantasy that “we turned on MFA, therefore we are done here” starts to look like a joke with a very expensive punchline.

ID Breaches: When Compliance Data Becomes Criminal Gold

Discord and the ugly value of “just a few ID images”

The Discord incident is the kind of story that makes privacy advocates inhale sharply through their teeth. Discord said an unauthorized party targeted third-party customer support services and accessed user data, including a small number of government-ID images. Attackers, meanwhile, claimed the breach was much larger and said they had access through a compromised contractor account tied to a Zendesk environment. Even where attacker claims remain unverified, the confirmed lesson is already clear enough: support systems have become treasure chests, and anything stored there is fair game once a downstream account gets popped.

What makes this particularly grim is the kind of data involved. A leaked password is bad. A leaked government ID image is bad in a way that lingers. It can feed fraud, account takeover, synthetic identity abuse, and the sort of bureaucratic nightmare that makes victims spend months proving they are, in fact, themselves. That is the part many executives still miss. The most damaging breach artifact is not always the most technical one. Sometimes it is a scanned document that was collected for a perfectly lawful, perfectly reasonable, perfectly terrible reason.

And that brings us to the compliance trap. As age assurance and identity checks spread across the web, more platforms are being pushed toward collecting or processing proof-of-age data. In theory, this is supposed to make online spaces safer. In practice, it also increases the concentration of highly sensitive identity material in places that were never designed to be little vaults of human paperwork. If a platform starts storing IDs, even indirectly through vendors or support workflows, it is no longer just a chat app, content platform, or customer service desk. It is now a de facto identity warehouse, whether it likes the label or not.

Salesforce and the front door nobody locked

If Discord was the week’s reminder that third parties matter, Salesforce was the reminder that human trust is still the most dangerous API in the building. Federal guidance and incident reporting described campaigns in which threat actors used voice phishing and malicious connected apps to compromise Salesforce environments for data theft and extortion. The trick was not dazzling malware. It was persuasion. Attackers convinced victims to authorize a malicious connected app, often a modified version of Salesforce Data Loader, which then received legitimate OAuth-backed access.

This is the kind of attack that makes defenders want to flip a table because it bypasses the controls people love to brag about. If the bad app is “approved,” then the logs can look respectable. If the token is issued by the platform itself, some monitoring pipelines shrug and move on. If a user was socially engineered into granting access, the breach report may still read like a software problem when the initial failure was really procedural trust wearing a fake mustache.

There is also a business lesson here. Extortion groups do not need every victim to be equally compromised. They just need enough data from enough environments to create panic, threaten publication, and force a decision. This week’s reporting relayed attacker claims involving dozens of affected organizations and tied part of the campaign to vishing-led connected-app abuse. That means the identity perimeter is no longer only about employees logging in. It is also about what employees can authorize, what integrations inherit, and what data “trusted” software is allowed to quietly siphon away through the back channel.

Code Smell Is Still a Security Smell

The phrase “code smell” can sound cute, like a software engineer wrinkling their nose at awkward style. In security, it is rarely cute. It is usually the odor of future incident response. Bad assumptions, unescaped variables, weak trust boundaries, permissive loaders, and ancient convenience hacks do not stay cosmetic for long. They become the wormholes attackers use to travel from “that seems odd” to “who authorized the ransom bridge call?”

Unity: the danger of “it probably won’t be exploited”

Unity’s CVE-2025-59489 is a beautiful example of why local-only arguments should never be used as a sedative. Unity said affected applications built with vulnerable editor versions were susceptible to unsafe file loading and local file inclusion, with a CVSS score of 8.4, though it also noted there was no evidence of exploitation. The underlying issue involved command-line argument handling that could allow unintended library loading. Research showed that malicious Android intents could influence Unity applications and, in some scenarios, enable arbitrary code execution.

The funny thing about bugs like this is that they often begin life in a meeting as “narrow,” “unlikely,” or “platform-specific.” Then someone curious comes along, follows the edge cases, and discovers that narrow does not mean harmless. It means specialized. That is different. In security, “specialized” often translates to “will be used by the first person patient enough to read the docs and ruin your afternoon.”

Unity deserves credit for shipping fixes and not pretending the problem was imaginary. But the broader lesson is bigger than one engine. Modern software stacks are full of helper features designed for debugging, interoperability, and ease of deployment. Those conveniences become liabilities when untrusted input can steer them. The internet has an endless supply of attackers willing to click the “developer convenience” button until it dispenses shells.

Dell UnityVSA and the immortal bad idea of shell execution

If the Unity story is about trust in arguments, the Dell UnityVSA story is about trust in strings. The watchTowr write-up on the UnityVSA pre-auth command-injection bug was the sort of research that makes experienced engineers laugh for exactly one second before going quiet. The vulnerable path concatenated raw URI input into an $exec_cmd variable and then executed it. That is not “subtle code smell.” That is “a gas leak wearing a name tag.”

To be fair, security history is basically one long museum of string handling gone wrong. But this case is still useful because it illustrates a recurring truth: old mistakes remain operationally relevant. Fancy dashboards, polished branding, enterprise UI chrome, and storage-appliance seriousness do not magically neutralize a command injection bug. A premium product can still hide a very discount problem underneath.

The real value of stories like this is not just that they identify a single flaw. They remind teams to audit patterns. Anywhere software takes external input, transforms it into a shell command, and says “what could possibly go wrong,” you are staring at a vulnerability family, not just one CVE.
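To make the pattern concrete, here is a minimal Python sketch of the same family of mistake and one common way out of it. This is not the UnityVSA code. The function names and the `echo` command are hypothetical, chosen only to show the difference between splicing input into a shell string and passing it as a single argument with no shell involved.

```python
import shlex
import subprocess
import sys


def build_unsafe_command(uri_fragment: str) -> str:
    # Anti-pattern: raw external input is spliced into a shell command string.
    # Anything the shell treats as syntax (;, |, $(), backticks) becomes live.
    return f"echo checking {uri_fragment}"


def build_quoted_command(uri_fragment: str) -> str:
    # If a shell string is truly unavoidable, quote the untrusted part.
    return f"echo checking {shlex.quote(uri_fragment)}"


def run_without_shell(uri_fragment: str) -> str:
    # Preferred: pass an argument list and never invoke a shell at all.
    # The input arrives in the child process as exactly one argv entry.
    result = subprocess.run(
        [sys.executable, "-c", "import sys; print(sys.argv[1])", uri_fragment],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


if __name__ == "__main__":
    payload = "status; id"  # hypothetical attacker-controlled value
    print(build_unsafe_command(payload))   # the ';' would split this into two commands
    print(build_quoted_command(payload))   # the payload is wrapped in single quotes
    print(run_without_shell(payload))      # the child sees the payload as plain data
```

The design choice worth copying is the last one: when no shell parses the string, there is no shell syntax for the input to abuse.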

Oracle E-Business Suite and the attack chain as performance art

Then we arrive at Oracle E-Business Suite, where the week’s most elegant horror story lived. Oracle warned that CVE-2025-61882 was remotely exploitable without authentication and could lead to remote code execution. Security researchers then walked defenders through the exploit chain in a way that felt equal parts incident analysis and magic trick explained in slow motion.

The chain reportedly combined SSRF, CRLF injection, connection reuse, path traversal through a /help/../ pattern, and unsafe XSL processing to reach code execution. Each individual weakness sounds manageable in isolation. Together, they become one of those attack stories that makes security teams mutter, “Of course it was a chain.” Because it usually is. The breakthrough is rarely one cinematic bug. It is five ordinary mistakes standing on each other’s shoulders in a trench coat.
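One link in a chain like that, the /help/../ style traversal, is easy to illustrate in isolation. The sketch below is a generic Python example, not a reconstruction of Oracle’s logic. It assumes a hypothetical rule that anything under `/help/` is public, and shows why checking the raw path before normalizing it gives the wrong answer.

```python
import posixpath
from urllib.parse import unquote, urlsplit

PUBLIC_PREFIX = "/help/"  # hypothetical "no authentication required" prefix


def naive_is_public(raw_url: str) -> bool:
    # Anti-pattern: trusts the raw path exactly as the client sent it.
    return urlsplit(raw_url).path.startswith(PUBLIC_PREFIX)


def normalized_path(raw_url: str) -> str:
    # Decode percent-escapes, then collapse "." and ".." segments.
    path = unquote(urlsplit(raw_url).path)
    return posixpath.normpath(path)


def is_public_after_normalizing(raw_url: str) -> bool:
    # Decide on the path the server will actually resolve, not the one it was handed.
    return normalized_path(raw_url).startswith(PUBLIC_PREFIX)


if __name__ == "__main__":
    for url in ("/help/index.html", "/help/../admin/config", "/help/%2e%2e/admin/config"):
        print(url, naive_is_public(url), is_public_after_normalizing(url))
```

On its own this is a small bug. The point of the Oracle story is what happens when a small bug like this hands its output to the next small bug.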

This is what I mean by poetic flows. Attackers increasingly think compositionally. They do not need a perfect primitive if they can sequence imperfect ones. They move from request smuggling to auth bypass to parser abuse the way good musicians move through chord changes: with timing, memory, and a little flair. Defenders still too often organize tools around single-bug categories, while attackers are out here writing mashups.

Why “Poetic Flows” Is More Than a Cute Phrase

Security people have a habit of using dry language for deeply weird realities. “Kill chain.” “Initial access vector.” “Privilege escalation.” These are accurate, but they can hide how creative attackers actually are. This week’s incidents show that modern compromise often has rhythm. The attacker studies the system’s assumptions, finds the line breaks, leans on trusted workflows, and turns normal behavior into malicious sequence.

That idea also echoes one of the oldest and smartest warnings in computing: Ken Thompson’s classic “Reflections on Trusting Trust.” The core question is timeless. How much can you trust a system simply because the obvious parts look clean? If the toolchain, platform, support vendor, or authorized app is already carrying hidden assumptions, the compromise may arrive dressed as normality. That is why “poetic flow” matters. It captures the uncomfortable beauty of chained compromise: the exploit succeeds not by brute force alone, but by understanding the story the system tells itself and then editing the ending.

What Security Teams Should Actually Do on Monday

First, treat identity stores like hazardous material, not office furniture. If your platform, vendor, or support workflow touches government IDs, age-verification artifacts, or other durable identity documents, reduce retention, segment access, and audit every downstream processor. “We only keep it for compliance” is not a defense strategy. It is a future deposition.

Second, review connected apps and delegated access with the same seriousness once reserved for administrator accounts. OAuth is not a side feature. It is an access plane. If users can approve an app that can query or export data, that workflow belongs inside your threat model, your training program, and your detection engineering.
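As a rough illustration of what that review could look like, here is a small Python sketch that flags connected apps holding broad scopes without explicit admin approval. Everything in it is an assumption made for the example: the `ConnectedApp` record, the scope labels, and the `admin_approved` flag are hypothetical and do not correspond to any specific platform’s API or export format.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Tuple


@dataclass(frozen=True)
class ConnectedApp:
    # Hypothetical inventory record; real platforms expose different fields.
    name: str
    scopes: FrozenSet[str]
    admin_approved: bool


# Hypothetical labels for scopes that allow bulk query or long-lived access.
HIGH_RISK_SCOPES = frozenset({"api", "full", "refresh_token"})


def apps_needing_review(apps: Iterable[ConnectedApp]) -> List[Tuple[str, List[str]]]:
    """Return (app name, risky scopes) for apps that hold broad access
    but were never explicitly approved by an administrator."""
    flagged = []
    for app in apps:
        risky = app.scopes & HIGH_RISK_SCOPES
        if risky and not app.admin_approved:
            flagged.append((app.name, sorted(risky)))
    return flagged


if __name__ == "__main__":
    inventory = [
        ConnectedApp("Reporting Dashboard", frozenset({"openid"}), admin_approved=True),
        ConnectedApp("Data Loader (copy)", frozenset({"api", "refresh_token"}), admin_approved=False),
    ]
    for name, scopes in apps_needing_review(inventory):
        print(f"review: {name} holds {scopes}")
```

The logic is deliberately boring. The hard part in practice is having the inventory at all, and having someone who owns the list.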

Third, hunt for smell, not just signatures. Search for unescaped command construction, unsafe deserialization, weird loader behavior, permissive path handling, and parser features nobody fully understands anymore. Vulnerability scanners are useful. So is reading your own code like a slightly malicious person with insomnia.
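A smell hunt does not have to start with expensive tooling. As a minimal sketch, assuming a Python codebase, the standard-library `ast` module can list the call sites where a shell is invoked, so a human can check whether untrusted input reaches them. The function name `find_shell_smells` is made up for this example, and the check is intentionally shallow: it finds candidates, it does not prove exploitability.

```python
import ast
from typing import List, Tuple


def find_shell_smells(source: str) -> List[Tuple[int, str]]:
    """Return (line number, description) for calls that hand a string to a shell."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # Recover a dotted name such as "os.system" or "subprocess.run".
        if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
            dotted = f"{func.value.id}.{func.attr}"
        elif isinstance(func, ast.Name):
            dotted = func.id
        else:
            continue
        if dotted in {"os.system", "os.popen"}:
            findings.append((node.lineno, dotted))
        for kw in node.keywords:
            is_true = isinstance(kw.value, ast.Constant) and kw.value.value is True
            if kw.arg == "shell" and is_true:
                findings.append((node.lineno, f"{dotted}(shell=True)"))
    return sorted(findings)


if __name__ == "__main__":
    sample = (
        "import os, subprocess\n"
        "os.system('ls ' + user)\n"
        "subprocess.run(cmd, shell=True)\n"
        "subprocess.run(['ls', user])\n"
    )
    for lineno, what in find_shell_smells(sample):
        print(f"line {lineno}: {what}")
```

Aliased imports and dynamically built calls will slip past a check this simple, which is one more reason to pair it with the insomnia-fueled code reading.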

Fourth, assume exploit chains, not isolated bugs. If you find an SSRF, ask what internal service it can reach. If you find a weak parser, ask what auth rules can be sidestepped on the way there. If you find a harmless-looking helper endpoint, assume somebody else is already trying to make it unforgettable.
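For the SSRF question in particular, a first triage step is simply asking whether a URL the server is willing to fetch points somewhere internal. The Python sketch below uses only the standard library and a hypothetical `classify_target` helper. It handles literal IP addresses and `localhost` only. Hostnames are reported as needing resolution, because a real check has to resolve the name and test the resulting addresses, which this example does not attempt.

```python
import ipaddress
from urllib.parse import urlsplit


def classify_target(url: str) -> str:
    """Classify where a server-side fetch of `url` would land.

    Returns "internal", "external", or "needs-resolution".
    """
    host = urlsplit(url).hostname
    if host is None:
        return "internal"  # unparseable targets are treated as suspicious
    if host == "localhost":
        return "internal"
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # A DNS name: the answer depends on what it resolves to at fetch time.
        return "needs-resolution"
    if addr.is_private or addr.is_loopback or addr.is_link_local:
        return "internal"
    return "external"


if __name__ == "__main__":
    for url in (
        "http://169.254.169.254/latest/meta-data/",
        "http://127.0.0.1:8080/admin",
        "http://[::1]/",
        "http://8.8.8.8/",
        "https://example.com/",
    ):
        print(url, "->", classify_target(url))
```

Every URL that comes back "internal" is a candidate next link in a chain, which is exactly the question this step is meant to raise.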

Finally, stop measuring security maturity only by prevention. Forensics, logging, revocation, app governance, contractor controls, and data minimization matter because compromise now often arrives through “approved” mechanisms. If the breach uses your own workflows against you, visibility is not a luxury. It is the difference between a contained incident and a six-month public autopsy.

The Human Cost Behind the Headlines

Weekly security coverage can make it all feel abstract, like a scoreboard for broken software. It is not. The Identity Theft Resource Center said it tracked 3,322 data compromises in 2025, a record, and noted that 80% of surveyed consumers had received at least one breach notice in the previous 12 months. That means identity compromise is not some niche problem affecting only unlucky enterprises with dramatic logos. It is ambient. It is routine. It is woven into ordinary life now.

That is why stories involving IDs, support systems, and connected apps matter so much. When an organization loses source code, that is bad. When it loses the data people use to prove who they are, that becomes a social problem, a financial problem, and often a psychological one. Breach fatigue has made many people numb, but numbness is not resilience. It is just exhaustion wearing office clothes.

Experience Section: What a Week Like This Feels Like From the Front Row

If you spend enough time reading security news, a week like this starts to feel strangely familiar in the worst possible way. The names change. The logos change. The bug classes rotate in and out like seasonal drinks. But the emotional shape of it stays the same. First comes the headline that sounds specific: a support contractor account, a connected app, a command injection, a quietly terrifying parser chain. Then comes the sinking realization that the details are different, but the assumptions underneath are identical. Too much trust. Too much access. Too much data kept around because deleting things is less glamorous than collecting them.

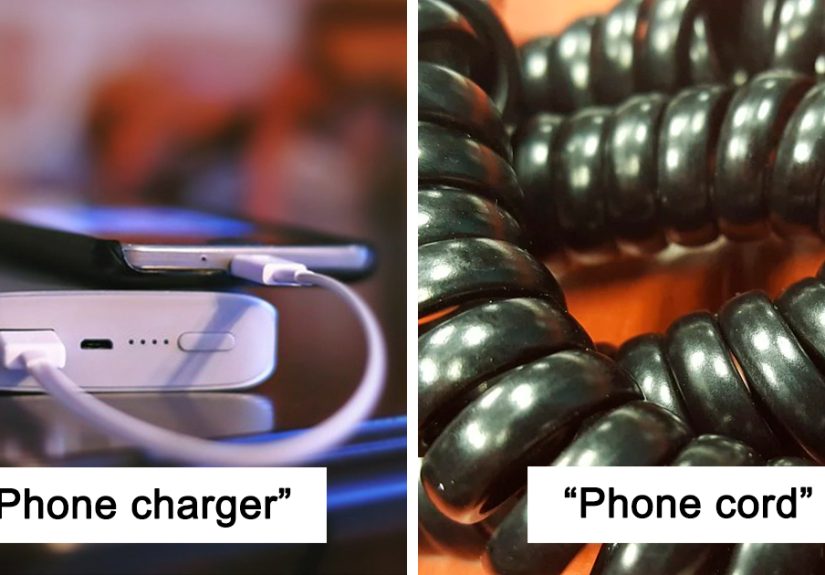

There is also a peculiar kind of whiplash in how these stories land. One minute you are reading about government IDs exposed through a customer support system, which feels like the digital equivalent of leaving your passport in a food court. The next minute you are knee-deep in a write-up about CRLF injection and XSLT-driven code execution, which sounds like a graduate seminar taught by a storm cloud. Then you jump over to a social-engineering campaign where attackers simply talked people into approving the wrong app, and suddenly the most advanced exploit in the room is a phone call.

That is the real experience of modern security: technical sophistication and human predictability colliding at speed. The defenders are often doing real work. Vendors patch. Researchers disclose responsibly. Advisories get published. Incident responders drink heroic amounts of coffee. And yet the same broad truths keep resurfacing. Anything that can be over-trusted will be over-trusted. Anything that can quietly inherit permission will quietly inherit permission. Anything that smells slightly off in the codebase will someday be found by someone with worse intentions and better patience.

There is humor in it, but it is the dark, professional kind. Security people joke because the alternative is staring blankly into the middle distance while whispering “why is that shell command built from a URI?” for three straight days. You laugh when a supposedly hardened enterprise stack is undone by a classic command execution pattern. You laugh when an “approved integration” turns out to be the attacker’s favorite costume. You laugh because nobody wants to say out loud that the internet still runs on a heroic amount of optimism, duct tape, and commented-out regret.

And yet, buried inside weeks like this, there is something useful. The patterns become impossible to ignore. Identity is not a support function anymore; it is a primary battlefield. Code smell is not an aesthetic complaint; it is predictive telemetry. Attack chains are not rare masterpieces; they are how modern intrusions behave when systems are too interconnected and too trusting. Once you see that clearly, the news stops feeling random. It starts feeling diagnostic.

That is why this week matters. Not because every incident was unprecedented, but because taken together they form a brutally honest picture of where security is heading. More identity abuse. More trusted-tool compromise. More exploit chaining. More pressure from compliance and regulation. More need for organizations to think less like owners of software and more like curators of trust relationships. That may not be a cheerful conclusion, but it is a practical one. And in security, practical beats cheerful every time.

Conclusion

This week in security was not really about three isolated themes. It was one story told three different ways. ID breaches showed how dangerous sensitive verification data becomes once it spreads through vendors and support layers. Code smell showed, once again, that fragile engineering habits age into exploitable security debt. Poetic flows showed how attackers chain tiny weaknesses into elegant compromise paths that feel almost inevitable in hindsight.

If there is one sentence security teams should tape to the monitor this week, it is this: the systems you trust most casually are the ones attackers study most carefully. That includes support platforms, connected apps, command handlers, parser logic, and even the assumptions you inherited from years of “temporary” software decisions. The internet does not usually collapse because of one massive, obvious mistake. It collapses because five little conveniences met each other in a dark alley and decided to collaborate.