Table of Contents

- Scientists aren’t the problem. The “listening” part is.

- The podcast’s core point: ignoring warnings has consequences

- Why people tune out science (even when they benefit from it)

- Where ignoring scientists shows up in real life

- So… how do we actually listen to scientists?

- The payoff: listening is cheaper than regret

- Experiences from the real world: what “not listening” looks like up close (and what helps)

There’s a joke that goes something like: “Scientists warned us… and we responded by arguing in the comments section.”

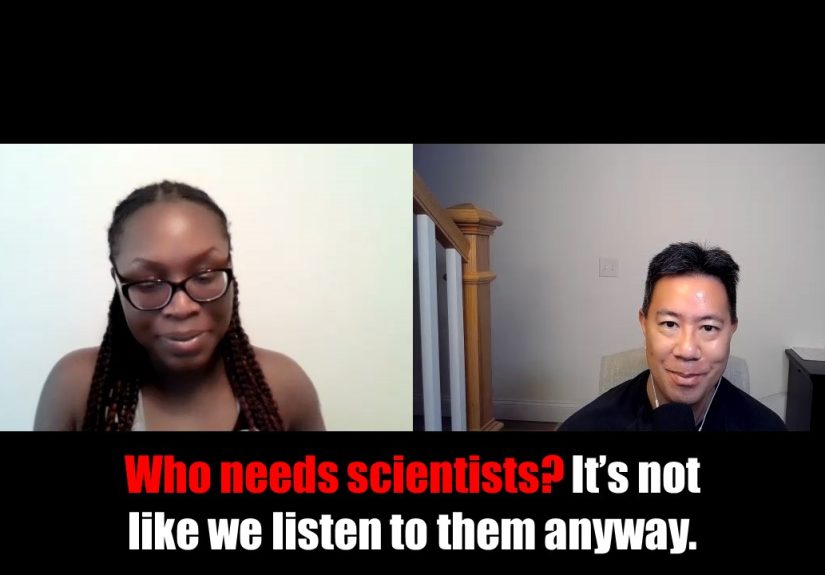

If you’ve ever listened to a health or current-events podcast and thought, “Wow, this is painfully accurate,” you’ll get the vibe of

“Who needs scientists? It’s not like we listen to them anyway,” a KevinMD podcast episode built around a companion piece by family physician Tomi Mitchell.

The title is sarcastic. The topic is not.

Because here’s the weird part: the U.S. runs on science the way your phone runs on electricity: quietly, constantly, and with complete panic when it stops.

We trust science to keep airplanes up, keep food from turning into biology experiments, keep hospitals from operating like medieval reenactments,

and keep hurricanes from being complete surprise guests at the coast. And yet, when scientists deliver bad news (about a virus, a heat wave, or the long-term

math of fossil fuels), many of us suddenly develop a passionate belief in “doing our own research,” typically on the one website that sells supplements shaped like

gummy bears.

This article breaks down the big question behind the podcast’s punchline: Why don’t we listen to scientists, and what would it take to change that?

We’ll look at trust, misinformation, identity, incentives, and real-world consequences. And we’ll end with practical ways to “listen” without turning life into a

never-ending lecture from a lab coat.

Scientists aren’t the problem. The “listening” part is.

When people say “listen to scientists,” it can sound like “obey scientists,” which triggers a very American reflex: “Don’t tell me what to do.”

But listening isn’t obedience. It’s a way of taking evidence seriously, especially when the topic involves shared risk (public health), shared infrastructure (energy),

or shared reality (climate).

Scientists do three boring-but-crucial things that make modern life possible:

- Measure what’s happening (data, trends, risk).

- Test what might be true (experiments, trials, peer review).

- Revise when new evidence shows up (the feature people mistake for “flip-flopping”).

That last part, revision, is where science looks weak to the public but is actually strong. Science is designed to be corrigible. It updates.

The internet, meanwhile, is designed to be confident.

The podcast’s core point: ignoring warnings has consequences

In the KevinMD post that inspired the episode, Mitchell uses sharp humor to highlight something uncomfortable:

scientists have been warning about major threats for decades (think climate change), and Americans still treat prevention like an optional app update.

She also draws a line between dismissing climate science and dismissing public health guidance, especially during COVID-19, where misinformation and politicization

turned basic risk-reduction into a cultural cage match.

The point isn’t that scientists are always right about everything. The point is that ignoring the best available evidence is not a strategy.

It’s a vibe. And vibes don’t lower sea levels, reduce smoke exposure, or stop a contagious disease.

Why people tune out science (even when they benefit from it)

1) The misinformation firehose never shuts off

The U.S. Surgeon General has described health misinformation as a serious public health threat and called for a “whole-of-society” response.

That’s not dramatic language. That’s the federal equivalent of “this is why we can’t have nice things.”

Polling backs up the concern: large majorities of Americans say false and inaccurate information about health is a major problem, and many report encountering specific

false claims. In other words, misinformation isn’t a niche hobby. It’s background noise, like hold music, but with worse outcomes.

2) “Science” got mixed up with “teams”

People don’t absorb information in a vacuum; they absorb it inside identities: political, cultural, religious, regional, and personal.

When a scientific issue becomes a symbol of “their side vs. my side,” evidence gets evaluated like sports commentary:

it’s not about what happened; it’s about who you want to win.

Survey research in the U.S. shows that confidence in scientists remains relatively high overall, but it’s also polarized. Trust dips and rises over time,

and partisan gaps matter. That means “listen to scientists” can land very differently depending on who a person thinks is speaking for science.

3) The scientific process is unintuitive (and badly marketed)

Science is slow, probabilistic, and allergic to certainty. It speaks in confidence intervals.

Social media speaks in absolutes. Your uncle’s group chat speaks in all caps.

The National Academies has emphasized that effective science communication isn’t just dumping facts into the public square like you’re feeding pigeons.

It’s about audience values, trust, messengers, and context. Translation matters. Framing matters. Empathy matters.

4) People trust people more than institutions

On topics like vaccines, research repeatedly shows a consistent pattern: trusted health professionals and local relationships matter.

That’s why the CDC emphasizes that trust is built through conversations (between patients, parents, clinicians, and communities), not through top-down pronouncements.

If your only “science communicator” is a headline, you’re in trouble. If it’s a clinician you know, you’re in a much better place.

5) Bad incentives reward hot takes, not careful truth

Scientists get rewarded for being careful. Platforms get rewarded for being clickable. Politics gets rewarded for being decisive.

Those incentives collide in public. The result is a marketplace where nuance loses to certainty, and careful updates look like weakness.

Where ignoring scientists shows up in real life

Case study: climate change and the “future” that arrived early

NASA and NOAA describe climate change in blunt terms: warming increases the odds and intensity of certain extreme events and contributes to impacts like longer fire seasons,

heavier rainfall in some places, stronger heat extremes, and higher risks to health and infrastructure. The CDC also outlines how climate-driven hazards (heat, wildfire smoke,

flooding, and shifts in infectious disease patterns) affect health.

Meanwhile, public opinion is complicated: many Americans say global warming is happening, and surveys track beliefs and policy preferences down to the county level.

But belief doesn’t automatically translate into action, and action doesn’t automatically translate into policy that matches the scale of the problem.

The gap between “I believe” and “we did something” is where the podcast’s sarcasm becomes a warning label.

Case study: COVID-19, vaccines, and the cost of confusion

COVID made a hard truth visible: public health is a group project. If enough people opt out, the group grade tanks.

During the pandemic, the U.S. watched basic facts (transmission, masks, vaccines, risk by age and comorbidities) get dragged into ideological fights.

Health misinformation didn’t just confuse people; it undermined trust, slowed uptake of protective behaviors, and made it harder for communities to respond.

Vaccine safety monitoring and risk communication also became a test of whether the public understands how science works.

Real safety systems track side effects, update guidance, and refine warnings, because science is supposed to adjust as more data comes in.

That’s not scandal; that’s the system doing its job.

So… how do we actually listen to scientists?

Step 1: Separate “science” from “policy”

Science can tell us what’s likely to happen under certain conditions (risk). Policy decides what we do about it (values, tradeoffs, money, feasibility).

Confusing the two makes people feel manipulated: “They’re using science to control us.”

A clearer framing is: science informs choices; it doesn’t replace them.

Step 2: Use trusted messengers and local context

The best message in the world fails if the messenger has zero trust. Clinicians, pharmacists, nurses, community leaders, and local public health workers

are often more persuasive than distant authorities. Listening scales when trust is personal.

Step 3: Make communication human, without dumbing it down

Communication research and professional groups have been pushing the same idea: facts matter, but stories help people understand why facts matter.

AAMC messaging around restoring trust in science emphasizes storytelling for a reason: it connects information to lived priorities:

kids, costs, safety, dignity, and the future.

Step 4: Upgrade your “information diet”

You don’t need a PhD to vet claims, but you do need a process. Here’s a simple one:

- Source check: Is this coming from a credible public health agency, major medical center, or peer-reviewed journal?

- Consensus check: Do multiple independent expert groups broadly agree?

- Evidence check: Are there data, methods, and limits, or just vibes and screenshots?

- Incentive check: Is someone selling a miracle product, political outrage, or a personal brand?

- Update check: Is this new information, or recycled content that’s out of date?

Listening to scientists doesn’t mean believing every claim instantly. It means weighting evidence appropriately and being skeptical in the right direction:

skeptical of viral certainty, not of careful work.

The payoff: listening is cheaper than regret

A lot of the podcast’s sting comes from a simple truth: prevention feels expensive until the bill arrives for ignoring it.

It costs money to improve ventilation, plan for heat, modernize the grid, strengthen flood defenses, and fund public health.

It costs more to rebuild, treat, evacuate, and mourn.

Scientists aren’t asking to run your life. They’re asking you to notice reality before reality starts leaving voicemails.

And yes, they’d like you to stop treating “peer review” like it’s a suspicious restaurant rating.

Experiences from the real world: what “not listening” looks like up close (and what helps)

To make this topic less abstract, here are a few composite, real-to-life scenarios (based on common patterns clinicians, teachers, and families describe) where the

“we don’t listen to scientists” problem shows up in everyday moments.

Experience #1: The clinic conversation that starts with a meme.

A patient sits down, pulls out a phone, and says, “Doc, is this true?” The screen shows a screenshot of a screenshot of a post from someone’s cousin’s barber’s

podcast. The claim is alarming, oddly specific, and mysteriously unsupported by anything resembling a study. The clinician takes a breath, because the goal isn’t to

“win.” The goal is to keep a relationship strong enough that the patient will keep asking questions instead of disappearing into algorithmic wilderness.

What helps is not mockery, but a calm walkthrough: what we know, what we don’t, what the risks are, and where trustworthy updates live. That conversation is science

communication in its natural habitat: respectful, practical, and human.

Experience #2: The family group chat, now featuring epidemiology.

Somebody posts an article with a headline that screams like it stubbed its toe. Another person replies with, “I’m just asking questions.”

A third person says, “You can’t trust anyone.” Suddenly the chat is a small-scale model of the internet, except with more emotional attachment and worse punctuation.

What tends to work isn’t dropping a pile of links like you’re feeding a paper shredder. It’s asking: “What would change your mind?” and “What kind of evidence would

you accept?” Sometimes the answer is “nothing,” but often the answer is “I’m scared,” “I’m tired,” or “I don’t know who to trust anymore.”

When fear is the fuel, facts alone don’t steer.

Experience #3: The weather that doesn’t match the old mental model.

People notice patterns: “It never used to get this hot,” “fire season feels longer,” “storms are weirder,” “my allergies are worse.”

Then the conversation veers into labels: climate change, politics, identity. One person wants data; another wants to avoid conflict.

A productive turn is to anchor to shared goals: protecting kids from heat illness, reducing smoke exposure, keeping the power on, lowering insurance shocks.

You don’t have to start with ideology. You can start with the lived experience and connect it to trusted sources that explain mechanisms and risk.

Experience #4: The moment someone thinks science should be “certain.”

A person hears updated guidance and concludes, “They lied.” But science didn’t lie; it learned.

That misunderstanding is fixable when communicators say the quiet part out loud: early data are incomplete; recommendations are made under uncertainty;

updates happen because more evidence arrives. When people understand that updates are normal, they stop interpreting every change as a conspiracy plot twist.

Experience #5: The surprising power of a trusted messenger.

A neighbor, pastor, coach, nurse, or pharmacist explains something in plain language, without contempt. The listener nods.

Not because the explanation was “more scientific,” but because it came from someone who feels like us.

That’s the most overlooked lesson in all of this: people rarely change their minds because they got dunked on.

They change their minds when they feel safe enough to update without humiliation.

If you take one practical message from the podcast’s sarcastic title, let it be this:

listening to scientists is less about surrendering freedom and more about improving your odds.

Evidence is not a cage. It’s a flashlight. And in a world full of confident darkness, a flashlight is a pretty good deal.